Introduction

THE_OPER& is a performance developed by collaborators at Duke University; it ran for five nights in January 2018. The collaborators included media artist Bill Seaman, composer John Supko, director Jim Findlay, performers from the Lorelei Ensemble, and visual artist Keith Scretch. THE_OPER& was an algorithmic, performative piece that speculated on future intelligence. As the machine learning collaborator and researcher, I was interested in creating the appearance of exploratory thought patterns that would engage the audience in speculation about future "thought systems" and cybernetics.

Performance Abstract

Is technology making or breaking our world? That question is central to THE_OPER&, a bold new opera developed and premiered at Duke University that uses the high-drama framework of opera and advanced technology to explore ideas of apocalypse, renewal, and survival in the modern age. During each performance, a computer system preloaded with video, sound, and poetic text fragments generates an original world, specific to the room and audience. That world eventually cedes to entropy, disintegrating from disaster and destruction until it falls into chaos, only to be rebuilt. The cycle repeats. A voice — the system's — narrates the action, expressing the computer's consciousness as a chorus of voices responds to the changing environment. The score moves from minimal and ambient to complex, industrial textures, a soundscape linked to the rise and fall and rise of the world within the room.

Development

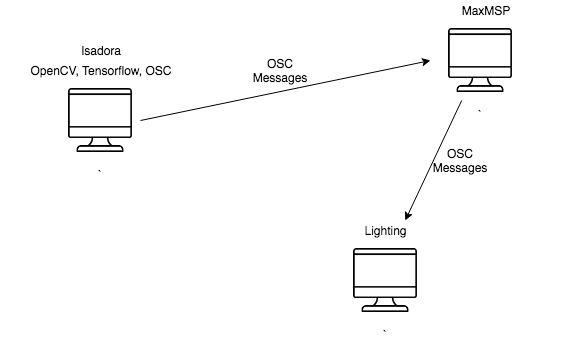

The system developed for THE_OPER& married several software platforms with custom code to create the algorithmic and machine learning aspects of the piece. The setup consisted of three computers that communicated via Open Sound Control (OSC) messages, which triggered events and passed data and control logic between platforms. One computer ran Isadora for visual output and Keras for machine learning, another ran Max/MSP for the compositions, and the third controlled lighting. The system was designed so that a single computer could initiate the piece and run the entire two-hour performance without intervention from any technicians.
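To illustrate the kind of message that passes between machines in a setup like this, here is a minimal sketch of an OSC 1.0 message encoder and UDP sender using only the Python standard library. It is not the code used in the performance; the address `/scene/start`, the host, and the port are hypothetical placeholders, and the sketch handles only integer, float, and string arguments.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, NUL-terminated, padded to a multiple of 4 bytes."""
    raw = text.encode("ascii") + b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def build_osc_message(address: str, *args) -> bytes:
    """Build an OSC 1.0 message from an address and int/float/str arguments."""
    type_tags = ","  # the type tag string always starts with a comma
    payload = b""
    for arg in args:
        if isinstance(arg, bool):
            raise TypeError("bool arguments are not handled in this sketch")
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # 32-bit big-endian integer
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # 32-bit big-endian float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += _osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(host: str, port: int, address: str, *args) -> None:
    """Send one OSC message over UDP (fire-and-forget, no acknowledgement)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(build_osc_message(address, *args), (host, port))
```

A call such as `send_osc("127.0.0.1", 9000, "/scene/start", 3)` would deliver a scene cue to whatever is listening on that (assumed) port. Because OSC typically travels over UDP, the sender does not wait for a reply, which suits event triggering between loosely coupled platforms.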

Machine Learning

Development of the machine learning component went through several iterations. Ultimately, we settled on a pared-down version of our initial approaches that, while technically simple, produced the most compelling and thought-provoking output.

The concept was to give the impression that the system performing the piece was learning over time. In the first iteration, I developed a model to classify images by fine-tuning VGG16 on the images displayed throughout the performance. The category labels were then overlaid on each image in real time as it appeared. This produced accurate but visually uninteresting output: images of boats appeared with the label "boat," mountains with "mountain," and so on. There was little room for discovery, play, or imagination.

In the end, we chose a direction that opened the viewer to the possibility that the system might have the capacity to reason and learn over time. By using the stock VGG16 model, without fine-tuning, and displaying its classification probabilities across a wide array of categories, I developed a system that allowed for broader visual and conceptual exploration. Rather than a single confident label, the audience saw the system "considering" multiple interpretations of each image, with probabilities shifting and competing. The result represented something closer to associative thought than rote classification.
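The difference between the two approaches can be sketched in a few lines of plain Python. This is an illustration under stated assumptions, not the performance code: the labels and probabilities are invented, and the function names are hypothetical. In a Keras workflow, the probability vector would come from the model's prediction, and Keras provides a `decode_predictions` helper for ImageNet models that plays a role similar to `top_k` here.

```python
def top_label(probs, labels):
    """First-iteration style: return only the single most likely label."""
    best = max(range(len(probs)), key=probs.__getitem__)
    return labels[best]


def top_k(probs, labels, k=5):
    """Final style: return the k most likely (label, probability) pairs, highest first."""
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]


def overlay_lines(probs, labels, k=5):
    """Format the top-k candidates as text lines suitable for an on-screen overlay."""
    return [f"{label}: {p:.1%}" for label, p in top_k(probs, labels, k)]


# Invented example values, for illustration only.
labels = ["boat", "mountain", "cloud", "dock"]
probs = [0.48, 0.07, 0.15, 0.30]

print(top_label(probs, labels))           # single label
print(overlay_lines(probs, labels, k=3))  # several competing interpretations
```

Showing the ranked list instead of the single winner is what lets lower-probability, sometimes surprising categories reach the audience, and re-running it on each new image makes the displayed values shift over time.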